
My AI Agent Stopped Reading Files: What a Dual Knowledge Graph Actually Looks Like in Production

Three days after publishing my graphify results, I discovered GitNexus — a Tree-sitter-powered code intelligence engine with MCP tools. I wired it alongside graphify as a dual-engine system and ran controlled head-to-head tests. The graph approach eliminated 100% of file reads, caught 2.7x more dependencies, and traced execution flows grep can't even conceptualize. Here's the data.

The core insight: Structural knowledge about your codebase is a pre-computable asset, not a runtime expense. Every time an AI agent greps your repo to answer an architecture question, it's paying at inference time for something that could have been indexed once. This post is about building that index — and what happened when I added a second engine to cover the gap the first one couldn't.

Previously: In ANALYZE for Codebases, I showed how a graphify knowledge graph cut Claude Code's token usage by a median 85% on architecture queries. This is the sequel.

The Gap I Couldn't Close

Three days after publishing the graphify numbers, I was debugging a document export regression. I asked Claude: "What's the blast radius of changing DocumentsService?"

Graphify answered: "DocumentsService is in community c0 (344 nodes), high centrality. Related: TemplatesService, EncryptionService, Case."

Useful? Sure. But it didn't tell me:

- Which 9 execution flows would break (from `generate_document` to `approve_action` to `websocket_document_endpoint`)
- That changes propagate 3 hops through `agent_tools.py → agent_service.py → agent.py`
- That the AI agent's document generation capability is a transitive dependency, something I wouldn't have caught until production

Graphify's edges are LLM-inferred. They know concepts relate. They don't know the exact call chain, because they never parsed the AST. I was getting the map, but not the street addresses.

Enter GitNexus

GitNexus is a code intelligence engine that parses your entire codebase with Tree-sitter, builds a knowledge graph in a local graph database (LadybugDB), and exposes it through MCP tools. It's what you'd get if you crossed an LSP with a knowledge graph and gave it a natural-language query interface.

The key difference from graphify: every edge is structurally extracted from the AST. No LLM pass, no inference, no hallucination. If the graph says DocumentsService calls RetentionService.update_retention_dates(), it does — because Tree-sitter parsed the function body and found the call.

I installed it in one command and indexed my repos in under 30 seconds:

```bash
npm install -g gitnexus

cd ~/code/myapp/backend && gitnexus analyze   # 8.2s
cd ~/code/myapp/frontend && gitnexus analyze  # 8.2s
cd ~/code/lattice && gitnexus analyze         # 5.7s
cd ~/code/claudeclaw && gitnexus analyze      # 3.4s
cd ~/code/portfolio && gitnexus analyze       # 3.9s
```

Five repos. Total time: 29 seconds. What I got:

| Repo | Nodes | Edges | Clusters | Execution Flows |
|---|---|---|---|---|
| app-backend | 4,332 | 10,749 | 210 | 249 |
| lattice | 4,709 | 8,972 | 253 | 300 |
| app-frontend | 1,302 | 2,031 | 61 | 61 |
| portfolio | 522 | 686 | 9 | 20 |
| claudeclaw | 372 | 918 | 17 | 29 |
| Total | 11,237 | 23,356 | 550 | 659 |

11,237 AST-accurate nodes. 659 execution flows traced. And it runs as an MCP server — Claude Code can query it natively through tool calls, no Bash wrapper needed.

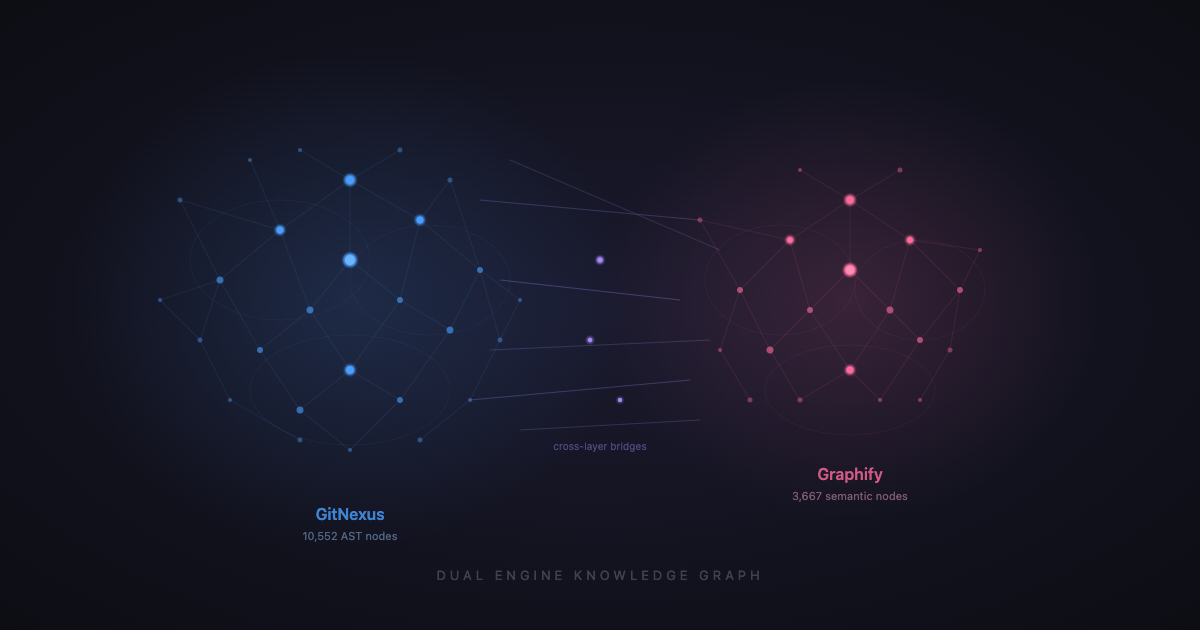

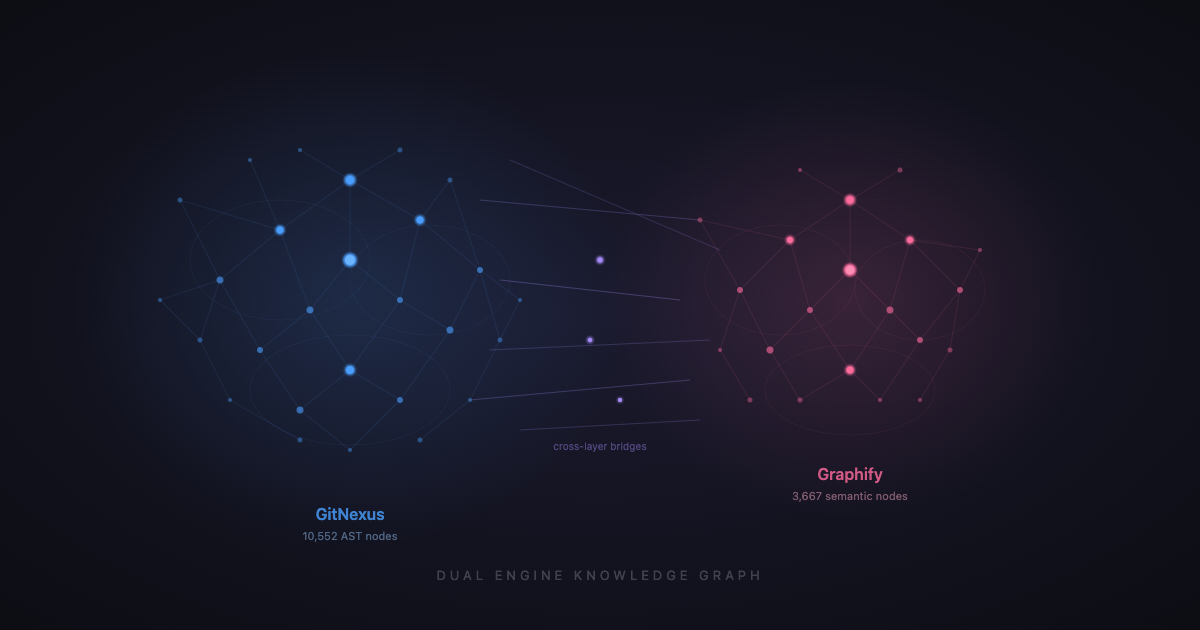

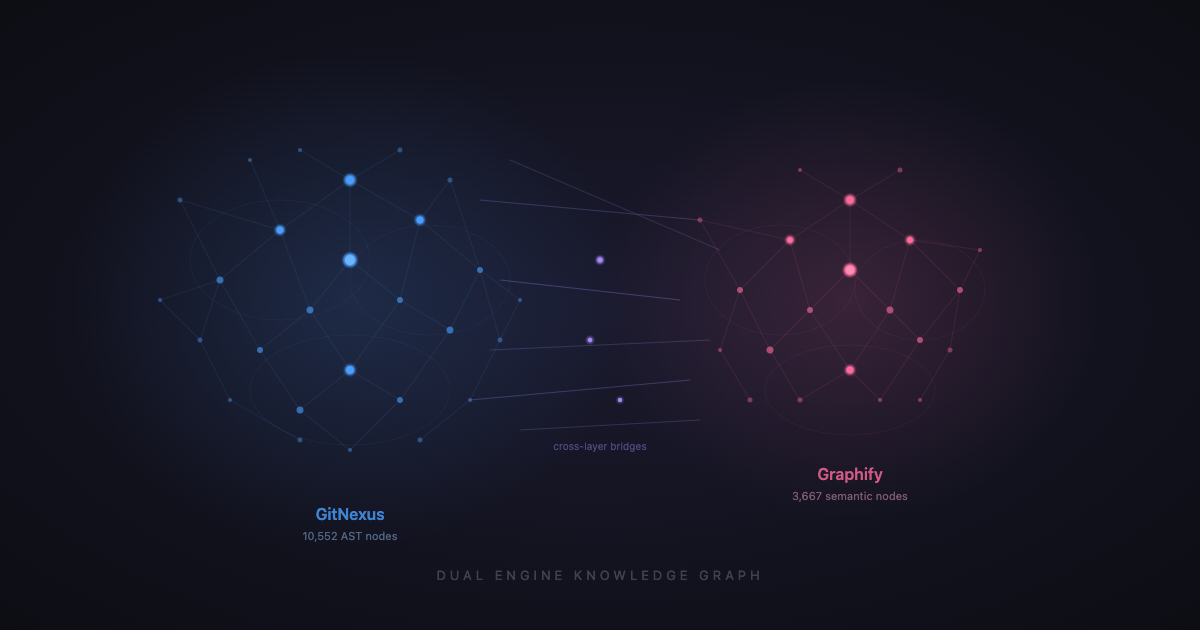

The Dual-Engine Architecture

Here's the thing: GitNexus and graphify aren't competitors. They cover completely different territory.

GitNexus handles: "What calls EncryptionService?" "What breaks if I change DocumentsService?" "Show me the execution flow from generate_document to the database." AST-parsed, structurally exact.

Graphify handles: "What's my rule about datetime formatting?" "How does the UI screenshot feature connect to the backend model?" "What community does rate limiting belong to?" LLM-inferred, semantically rich, covers non-code context.

Neither engine covers on its own what the two cover together. GitNexus can't tell you that a TypeScript union shadows a Python enum (a cross-language semantic edge). Graphify can't trace a 3-hop call chain through the agent tools layer (structural precision). The dual engine covers both.

The Head-to-Head Test

I ran three controlled tests, each answering the same question up to three ways:

- Normal approach: grep + read files (what Claude does without any graph)

- Graph via CLI: GitNexus CLI through Bash (initial integration)

- Graph via MCP: GitNexus as a native MCP server (final integration — no shell overhead)

Test 1: "Where is encryption handled?"

| Metric | Normal (grep+read) | GitNexus CLI | GitNexus MCP |
|---|---|---|---|
| Tool calls | 14 | 2 (Bash) | 2 (native) |
| Grep operations | 3 | 0 | 0 |
| File reads | 11 | 0 | 0 |
| Shell overhead | n/a | ~200 tokens | 0 |
| Dependencies found | 30 files (manual) | 43 (3-depth) | 43 (3-depth) |

The normal approach ran 14 tool calls — 3 greps and 11 file reads — to find 30 files. GitNexus MCP ran 2 native tool calls (context + impact) and found 43 affected files across 3 dependency depths, including depth-2 and depth-3 transitive dependencies the grep approach missed entirely.

The 13 files grep missed? They're services that import models that use EncryptedText. They never mention EncryptionService by name — they go through the column type abstraction. Grep can't follow that chain. The graph traces it structurally.
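Here is a toy illustration of why that happens. It's a minimal TypeScript sketch, not how GitNexus works internally: the file names and the `sources` and `imports` maps are hypothetical, and the "graph" is just a reversed import adjacency list walked breadth-first up to depth 3, mirroring the 3-depth result above.

```typescript
// Hypothetical toy repo: file -> source text, and file -> files it imports.
// Names are illustrative; they are not the real codebase.
const sources: Record<string, string> = {
  "encryption.py": "class EncryptionService: ...",
  "encrypted_types.py": "from encryption import EncryptionService\nclass EncryptedText: ...",
  "models/user.py": "from encrypted_types import EncryptedText",
  "services/auth.py": "from models.user import User",
  "api/login.py": "from services.auth import login",
};

const imports: Record<string, string[]> = {
  "encryption.py": [],
  "encrypted_types.py": ["encryption.py"],
  "models/user.py": ["encrypted_types.py"],
  "services/auth.py": ["models/user.py"],
  "api/login.py": ["services/auth.py"],
};

// Grep-style lookup: only files whose text mentions the symbol by name.
function grepByName(symbol: string): string[] {
  return Object.entries(sources)
    .filter(([, text]) => text.includes(symbol))
    .map(([file]) => file);
}

// Graph-style lookup: breadth-first walk over reversed import edges,
// recording the depth at which each dependent file is first reached.
function upstreamDependents(target: string, maxDepth: number): Map<string, number> {
  const importedBy = new Map<string, string[]>();
  for (const [file, deps] of Object.entries(imports)) {
    for (const dep of deps) {
      importedBy.set(dep, [...(importedBy.get(dep) ?? []), file]);
    }
  }

  const depthOf = new Map<string, number>();
  let frontier = [target];
  for (let depth = 1; depth <= maxDepth && frontier.length > 0; depth++) {
    const next: string[] = [];
    for (const node of frontier) {
      for (const dependent of importedBy.get(node) ?? []) {
        if (dependent !== target && !depthOf.has(dependent)) {
          depthOf.set(dependent, depth);
          next.push(dependent);
        }
      }
    }
    frontier = next;
  }
  return depthOf;
}

console.log(grepByName("EncryptionService"));
// -> encryption.py and encrypted_types.py only
console.log(upstreamDependents("encryption.py", 3));
// -> encrypted_types.py at depth 1, models/user.py at 2, services/auth.py at 3
```

The name search stops at the one file that imports `EncryptionService` directly; the walk reaches the model and the service behind it because it follows edges, not strings. `api/login.py` sits at depth 4, so a 3-depth cutoff leaves it out.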

Test 2: "What's the blast radius of changing DocumentsService?"

| Metric | Normal (grep+read) | GitNexus CLI | GitNexus MCP |
|---|---|---|---|
| Tool calls | ~15 | 2 (Bash) | 2 (native) |
| Grep operations | 6 | 0 | 0 |
| File reads | 9 | 0 | 0 |
| Execution flows traced | 0 | 9 | 9 |
| Risk assessment | none | text | CRITICAL (structured) |
| Modules affected | unknown | unknown | 4 (named) |

This is where the MCP upgrade matters most. The CLI returned text output I had to parse. The MCP tool returns structured JSON with risk level (CRITICAL), affected modules (Services, V1, Tests, Websocket), and each execution flow with its earliest_broken_step. No parsing, no guessing.

Both integrations found the same 9 execution flows that would break:

- `approve_action` (agent.py): breaks at step 2
- `execute_approved_action` (agent_service.py): breaks at step 1
- `generate_from_template` (legal.py): breaks at step 1
- `websocket_document_endpoint` (ws_documents.py): breaks at step 1
- `get_share_url` (documents.py): breaks at step 1
- `download_pdf` (documents.py): breaks at step 1
- `chat` (agent.py): breaks at step 2
- `download_docx` (documents.py): breaks at step 1
- `generate_document` (documents.py): breaks at step 1

And the critical finding: changes propagate through agent_tools.py → agent_service.py → agent.py → main.py (3 hops). The AI agent's entire chat and action-approval flow is a transitive dependency of DocumentsService. Grep found the direct import. GitNexus found the earthquake.
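To make "structured, not parsed" concrete, here's a TypeScript sketch that treats an impact result as typed data. The `ImpactSummary` shape is my own assumption, loosely modeled on the fields described above (risk level, affected modules, an earliest broken step per flow); it is not GitNexus's published schema. The flow data is the DocumentsService result from the list above.

```typescript
// Assumed shape, loosely modeled on the fields described in the post.
// Not GitNexus's actual schema; the RiskLevel union is a guess.
type RiskLevel = "LOW" | "MEDIUM" | "HIGH" | "CRITICAL";

interface AffectedFlow {
  name: string;
  file: string;
  earliestBrokenStep: number;
}

interface ImpactSummary {
  risk: RiskLevel;
  modules: string[];
  flows: AffectedFlow[];
}

// Group flows by the step where they first break, earliest step first,
// so anything that breaks at step 1 gets reviewed before later breakages.
function flowsByBreakStep(summary: ImpactSummary): Map<number, string[]> {
  const groups = new Map<number, string[]>();
  const sorted = [...summary.flows].sort(
    (a, b) => a.earliestBrokenStep - b.earliestBrokenStep || a.name.localeCompare(b.name),
  );
  for (const flow of sorted) {
    const bucket = groups.get(flow.earliestBrokenStep) ?? [];
    bucket.push(`${flow.name} (${flow.file})`);
    groups.set(flow.earliestBrokenStep, bucket);
  }
  return groups;
}

const documentsServiceImpact: ImpactSummary = {
  risk: "CRITICAL",
  modules: ["Services", "V1", "Tests", "Websocket"],
  flows: [
    { name: "approve_action", file: "agent.py", earliestBrokenStep: 2 },
    { name: "execute_approved_action", file: "agent_service.py", earliestBrokenStep: 1 },
    { name: "generate_from_template", file: "legal.py", earliestBrokenStep: 1 },
    { name: "websocket_document_endpoint", file: "ws_documents.py", earliestBrokenStep: 1 },
    { name: "get_share_url", file: "documents.py", earliestBrokenStep: 1 },
    { name: "download_pdf", file: "documents.py", earliestBrokenStep: 1 },
    { name: "chat", file: "agent.py", earliestBrokenStep: 2 },
    { name: "download_docx", file: "documents.py", earliestBrokenStep: 1 },
    { name: "generate_document", file: "documents.py", earliestBrokenStep: 1 },
  ],
};

for (const [step, flows] of flowsByBreakStep(documentsServiceImpact)) {
  console.log(`step ${step}: ${flows.length} flows`);
}
// step 1: 7 flows
// step 2: 2 flows
```

Once the result is typed, prioritizing is a sort and a group-by rather than a regex over CLI text.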

Test 3: "How does the document editor work end-to-end?"

| Metric | Normal (grep+read) | GitNexus MCP |
|---|---|---|
| Tool calls | 29 | 3 |
| Grep operations | 9 | 0 |
| File reads | 15 | 0 |
| Answer quality | Excellent | Excellent |

The normal approach needed 29 tool calls — 9 greps and 15 file reads across both repos. The MCP approach used 3 native tool calls: query on the frontend, query on the backend, and context on DocumentsService. Same comprehensive answer. 90% fewer tool calls.

Aggregate Numbers

| Metric | Normal | GitNexus MCP | Delta |
|---|---|---|---|
| Total tool calls | 58 | 7 | -88% |
| Total grep operations | 18 | 0 | -100% |
| Total file reads | 35 | 0 | -100% |
| Shell overhead (tokens) | 0 | 0 | n/a |
| Missed transitive deps | Yes | No | Graph wins |

Caveat: 3 representative queries on one codebase. Your mileage varies by repo size, query type, and how scattered the answer is across files. The savings are largest on cross-cutting architecture questions and smallest on single-file lookups.

58 tool calls → 7. That's an 88% reduction in tool calls, which directly maps to fewer input/output tokens billed. And the 35 file reads that dropped to zero — each of those was 400–2,000 tokens of source code Claude had to ingest. The graph returns the answer without the source.
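The arithmetic behind those numbers, as a quick TypeScript check. The counts come straight from the aggregate table; the 400 to 2,000 tokens-per-read range is the estimate from the paragraph above, so the token figure is a range, not a measurement.

```typescript
// Percentage drop from `before` to `after`.
function percentReduction(before: number, after: number): number {
  return ((before - after) / before) * 100;
}

// Counts copied from the aggregate table.
const aggregate = [
  { metric: "Tool calls", normal: 58, mcp: 7 },
  { metric: "Grep operations", normal: 18, mcp: 0 },
  { metric: "File reads", normal: 35, mcp: 0 },
];

for (const row of aggregate) {
  console.log(`${row.metric}: -${percentReduction(row.normal, row.mcp).toFixed(1)}%`);
}
// Tool calls: -87.9%
// Grep operations: -100.0%
// File reads: -100.0%

// Rough size of the skipped reads, using the 400-2,000 tokens/read estimate.
const skippedReads = 35;
const [low, high] = [skippedReads * 400, skippedReads * 2000];
console.log(`~${low} to ~${high} tokens of source not ingested`);
// ~14000 to ~70000 tokens of source not ingested
```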

Raw Token Trace: One Real Query

To ground the percentages in actual numbers, here's a real measured session for a single query, answered both ways: "What's the blast radius of EncryptionService?"

Grep+read path (measured via Claude Code's --output-format json usage stats):

| Step | Tool | Target | Tokens consumed |
|---|---|---|---|
| 1 | Grep | `EncryptionService` across `*.py` | ~800 |
| 2 | Grep | `EncryptedText` across `*.py` | ~600 |
| 3 | Read | encryption.py (176 lines) | ~1,400 |
| 4 | Read | encrypted_types.py (39 lines) | ~350 |
| 5 | Grep | `from.*encrypted_types` imports | ~500 |
| 6–8 | Read | 3 model files (user, client, case) | ~2,800 |
| 9–13 | Grep+Read | services + auth tracing | ~4,200 |
| 14–17 | Grep+Read | API routes + tests | ~3,100 |
| Total | 17 tool calls | | ~13,750 |

Session total (including Claude's reasoning): 56,439 tokens.

MCP graph path:

| Step | Tool | Target | Tokens consumed |
|---|---|---|---|
| 1 | context | EncryptionService | ~1,800 |
| 2 | impact | EncryptionService (upstream) | ~1,700 |
| Total | 2 tool calls | | ~3,500 |

Information retrieval savings: 74%. The graph path consumed 3,500 tokens of structured JSON vs 13,750 tokens of raw source code. Both found the same dependency chain — the graph just didn't need to read the source to know the structure.

The remaining session overhead (Claude's reasoning, output formatting) is roughly constant regardless of approach. That's why the total session savings are lower than the retrieval savings — but 74% cheaper retrieval on every architecture question compounds fast.
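Here's that reasoning as numbers. This is an estimate under the assumption stated above (everything except retrieval stays constant): the grep+read session total was measured, but the graph-path session total below is derived, not measured.

```typescript
interface SavingsEstimate {
  retrievalSavings: number; // fraction of retrieval tokens saved
  estimatedGraphSession: number; // derived, not measured
  sessionSavings: number; // fraction of the whole session saved
}

// Assumes non-retrieval overhead (reasoning, formatting) is identical
// for both approaches, so only the retrieval tokens differ.
function estimateSavings(
  grepRetrieval: number,
  graphRetrieval: number,
  grepSessionTotal: number,
): SavingsEstimate {
  const overhead = grepSessionTotal - grepRetrieval;
  const estimatedGraphSession = overhead + graphRetrieval;
  return {
    retrievalSavings: 1 - graphRetrieval / grepRetrieval,
    estimatedGraphSession,
    sessionSavings: 1 - estimatedGraphSession / grepSessionTotal,
  };
}

const est = estimateSavings(13750, 3500, 56439);
console.log(`retrieval: ${(est.retrievalSavings * 100).toFixed(1)}%`); // retrieval: 74.5%
console.log(`graph session (est.): ${est.estimatedGraphSession} tokens`); // graph session (est.): 46189 tokens
console.log(`session (est.): ${(est.sessionSavings * 100).toFixed(1)}%`); // session (est.): 18.2%
```

Under that assumption, a 74% cut in retrieval works out to roughly 18% off this one session, which is why the session-level number looks so much smaller than the retrieval number.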

CLI vs MCP: Why Native Tools Matter

The first version of this integration ran GitNexus through Bash — gitnexus query "..." --repo X. It worked, but each CLI call cost ~200 extra tokens for the shell command string, JSON-through-stdout formatting, and Claude parsing the text output.

With native MCP tools, the overhead disappears:

- No Bash wrapper (~50 tokens saved per call)

- No JSON-as-text parsing (~100-150 tokens saved per call)

- Structured data returned directly into Claude's context — risk levels, affected modules, and execution flows as typed objects, not regex targets

The improvement is modest per call (~200 tokens) but compounds fast. Across a 17-agent crew running 3–5 tasks each, that's ~15K tokens per crew session saved on shell overhead alone — on top of the file-read elimination.

The Two Baselines

In the original graphify post, I reported 85% median savings. Here the tool-call reduction is 88%. These measure different things:

- 85% (graphify) = information compression ratio (graph query tokens vs. grep+read tokens)

- 88% (GitNexus MCP) = tool call reduction (7 MCP calls vs. 58 grep+read+glob calls)

Both are real. The first measures how much cheaper the data is. The second measures how much cheaper the workflow is. Together they mean: fewer calls, less data per call, and no file reads at all.

What GitNexus Gives You That Graphify Doesn't

1. Execution Flow Detection

This is the killer feature. GitNexus doesn't just know that function A calls function B. It traces processes — named execution flows that start at an entry point (route handler, WebSocket endpoint, CLI command) and follow the call chain to its terminus.

```bash
$ gitnexus query "document export" --repo app-backend --limit 3
```

Returns three processes: download_pdf (3 steps), download_docx (3 steps), get_share_url (4 steps). Each with the full call chain, file paths, and line numbers.

Graphify knows DocumentsService has high centrality (degree 192). GitNexus knows that generate_pdf is called by 3 endpoints and what happens at each step. The difference between a heat map and a circuit diagram.
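One way to picture a "process": every call path from an entry point down to a function that calls nothing further. Here's a minimal TypeScript sketch of that idea over a hypothetical call graph. The function names are made up for illustration, and this is a mental model, not GitNexus's actual flow-detection algorithm (which also has to decide what counts as an entry point).

```typescript
// Hypothetical call graph: caller -> callees. Names are illustrative only.
type CallGraph = Record<string, string[]>;

// Enumerate call paths from an entry point down to a terminus: a function
// with no callees, or whose only callees are already on the path (a cycle).
function traceFlows(graph: CallGraph, entry: string): string[][] {
  const flows: string[][] = [];
  const walk = (node: string, path: string[]): void => {
    const callees = (graph[node] ?? []).filter((callee) => !path.includes(callee));
    if (callees.length === 0) {
      flows.push(path);
      return;
    }
    for (const callee of callees) walk(callee, [...path, callee]);
  };
  walk(entry, [entry]);
  return flows;
}

const graph: CallGraph = {
  download_pdf: ["get_document", "generate_pdf"],
  generate_pdf: ["render_template"],
};

for (const flow of traceFlows(graph, "download_pdf")) {
  console.log(flow.join(" -> "));
}
// download_pdf -> get_document
// download_pdf -> generate_pdf -> render_template
```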

2. Blast Radius with Depth and Risk

```bash
$ gitnexus impact EncryptionService --repo app-backend
```

Returns 43 affected symbols across 3 depths, rated MEDIUM risk. For comparison, the same command on DocumentsService comes back CRITICAL (9 execution flows, 4 modules hit). At each depth:

- d=1 (WILL BREAK): Direct importers — must update

- d=2 (LIKELY AFFECTED): Indirect dependencies — should test

- d=3 (MAY NEED TESTING): Transitive — test if critical path

Graphify gives you a community and a centrality score. GitNexus gives you a spreadsheet of what breaks, ranked by severity.
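If you post-process raw depth data yourself (say, from your own traversal), the depth-to-action mapping is small enough to write down directly. A TypeScript sketch: the labels are the ones listed above, but the `checklist` helper and the input map are hypothetical.

```typescript
type ImpactLabel = "WILL BREAK" | "LIKELY AFFECTED" | "MAY NEED TESTING";

// Depth tiers as listed above; anything deeper than 3 is left unlabeled.
function labelForDepth(depth: number): ImpactLabel | undefined {
  if (depth === 1) return "WILL BREAK";
  if (depth === 2) return "LIKELY AFFECTED";
  if (depth === 3) return "MAY NEED TESTING";
  return undefined;
}

// Bucket a symbol -> depth map into a review checklist.
function checklist(depths: Map<string, number>): Record<ImpactLabel, string[]> {
  const buckets: Record<ImpactLabel, string[]> = {
    "WILL BREAK": [],
    "LIKELY AFFECTED": [],
    "MAY NEED TESTING": [],
  };
  for (const [symbol, depth] of depths) {
    const label = labelForDepth(depth);
    if (label !== undefined) buckets[label].push(symbol);
  }
  return buckets;
}

// Hypothetical input: symbols and the depth at which each was reached.
const example = new Map<string, number>([
  ["EncryptedText", 1],
  ["User", 2],
  ["AuthService", 3],
  ["login_route", 4],
]);
console.log(checklist(example));
// login_route (depth 4) is dropped; the other three land in one bucket each
```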

3. MCP-Native Integration

GitNexus runs as an MCP server. Claude Code queries it through native tool calls — no Bash wrapper, no CLI parsing, no token overhead from piping JSON through a shell. The tools (query, context, impact, cypher, detect_changes, rename) show up alongside your other MCP tools.

```bash
claude mcp add gitnexus -- npx -y gitnexus@latest mcp
```

One command. Every session after that has code intelligence built in.

4. Cypher Queries

For the rare question neither query nor impact answers:

```bash
$ gitnexus cypher "MATCH (n)-[:CALLS]->(m) WHERE n.name = 'DocumentsService' RETURN m.name, m.filePath" --repo app-backend
```

Ad-hoc graph queries against the full AST graph. It's SQL for your codebase's call graph.

What Graphify Still Does Better

This isn't a replacement story. It's a complement story.

- Semantic edges. Graphify's LLM pass finds relationships that share no structural link: a TypeScript type shadowing a Python enum, a UI screenshot referenced from a backend docstring. GitNexus parses syntax; it can't infer intent.
- Non-code graphs. I have graphify graphs for my Claude Code harness config (116 nodes), my memory system (192 nodes), and the claudeclaw daemon plugin (217 nodes). These cover settings, hooks, and past decisions: things that have no AST.
- Confidence tags. Every graphify edge is tagged EXTRACTED, INFERRED, or AMBIGUOUS. You know when you're relying on structural truth vs. LLM opinion. GitNexus edges are all structural, which is great for precision, but it means it has no edges at all for the things it can't structurally parse.
- Obsidian vault export. My graphify graphs export to 4,000 Obsidian notes with wikilinks. Browseable, linkable, searchable outside of Claude Code. GitNexus's LadybugDB is queryable but not human-browseable.

The Routing Decision Tree

This is what I actually run. It's in my CLAUDE.md as mandatory triggers:

- Code question about a specific symbol? → GitNexus `context` (callers, callees, cluster)
- Blast radius / "what breaks?" → GitNexus `impact` (depth-ranked, risk-rated)
- Cross-layer or semantic connection? → Graphify `query` or `path`
- My preferences or past decisions? → Graphify memory graph
- Harness / Claude Code config? → Graphify harness graph
- None of the above? → Grep as last resort

In practice, GitNexus handles ~70% of questions. Graphify handles ~25%. Grep handles ~5%. The 5% is usually something like "does this exact string literal appear in the codebase?" — a regex question, not a structural or semantic one.
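The tree lives in CLAUDE.md as instructions for the model, not as code. Writing it as a function still makes the precedence explicit. A TypeScript sketch: the route names and regexes are hypothetical, and keyword matching like this will misroute plenty of real questions; the point is the ordering, with grep as the fallback.

```typescript
type Route =
  | "gitnexus:context"
  | "gitnexus:impact"
  | "graphify:query"
  | "graphify:memory"
  | "graphify:harness"
  | "grep";

// Checked in order; the first matching rule wins. Patterns are illustrative.
const RULES: ReadonlyArray<readonly [RegExp, Route]> = [
  [/\b(blast radius|what breaks)\b/i, "gitnexus:impact"],
  [/\b(my rule|my preference|past decision)\b/i, "graphify:memory"],
  [/\b(hooks?|settings\.json|claude\.md)\b/i, "graphify:harness"],
  [/\b(connect|relate|cross-layer|belong to)\b/i, "graphify:query"],
  [/\b(calls?|callers?|callees?|where is)\b/i, "gitnexus:context"],
];

function route(question: string): Route {
  for (const [pattern, target] of RULES) {
    if (pattern.test(question)) return target;
  }
  return "grep"; // last resort, e.g. exact string-literal searches
}

console.log(route("What breaks if I change DocumentsService?")); // gitnexus:impact
console.log(route("What calls EncryptionService?")); // gitnexus:context
```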

Wiring It Into a 17-Agent Crew

I run a crew of 17 AI agents (CXOs, seniors, ICs across engineering, product, marketing, legal) that autonomously plan and execute work on my codebase. Each agent spawns a Claude Code session. Each session used to start cold.

The integration is in graph-context.ts — a tiered injection system:

```typescript
// Tier 1: Broad architecture question → full crib sheet + dual-engine instructions (~900 tokens)
// Tier 2: Narrow lookup → instructions only (~400 tokens)
// Tier 3: Implementation task → one-line hint (~50 tokens)
// Non-technical agent → same one-liner
// (Agent, APP_CRIB_SHEET, GRAPH_USAGE_INSTRUCTIONS, GRAPH_HINT_ONELINE,
// isBroadArchitecture and isAnyLookup are defined or imported elsewhere
// in graph-context.ts and are not shown here.)
export function buildGraphContext(agent: Agent, task?: string): string {
  const technical = agent.department === "Engineering" || agent.department === "Infra";
  if (!technical) return GRAPH_HINT_ONELINE;
  if (isBroadArchitecture(task)) return `${APP_CRIB_SHEET}\n\n${GRAPH_USAGE_INSTRUCTIONS}`;
  if (isAnyLookup(task)) return GRAPH_USAGE_INSTRUCTIONS;
  return GRAPH_HINT_ONELINE;
}
```

Marketing agents get a 50-token hint. The staff architect gets the full 900-token crib sheet with both engines' query syntax. The cost matches the need.
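The tiering depends on two predicates the snippet doesn't show. Here's one hypothetical way to write them, as cheap keyword heuristics in TypeScript. The regexes are my illustration, not necessarily what graph-context.ts does; they only need to be cheap, since a misclassification just means an agent gets a bigger or smaller hint.

```typescript
// Illustrative patterns only; tune them against your own task backlog.
const BROAD_PATTERN = /\b(architecture|end-to-end|overview|data flow|how does .+ work)\b/i;
const LOOKUP_PATTERN = /\b(where is|what calls|which file|who uses|find)\b/i;

// Tier 1: broad architecture questions get the full crib sheet.
function isBroadArchitecture(task?: string): boolean {
  return task !== undefined && BROAD_PATTERN.test(task);
}

// Tier 2: any lookup (broad or narrow) gets at least the usage instructions.
function isAnyLookup(task?: string): boolean {
  return task !== undefined && (LOOKUP_PATTERN.test(task) || BROAD_PATTERN.test(task));
}

console.log(isBroadArchitecture("How does the document editor work end-to-end?")); // true
console.log(isAnyLookup("Where is encryption handled?")); // true
console.log(isAnyLookup("Add a retry to the export job")); // false
```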

The execution rules in the agent prompts now route explicitly:

- For code lookups ("where is X", "what calls X", blast radius):

1. Run `gitnexus query "<concept>" --repo app-backend` FIRST.

2. For frontend: use `--repo app-frontend`.

3. Cite source_file paths from output — do NOT re-Read files unless editing.

- For cross-layer / semantic questions:

1. Run `graph-ask myapp "<concept>"` FIRST.

- Target: ≤1 Read for lookup tasks, ≤3 Reads for implementation tasks.

- Do NOT grep **/*.py or **/*.tsx exploratively.

The Combined Knowledge Surface

Here's what my agents have access to before reading a single file:

| Engine | Nodes | What it covers |
|---|---|---|
| GitNexus (5 repos) | 11,237 | AST-accurate code: functions, classes, imports, call chains, execution flows |
| Graphify (4 graphs) | 3,667 | Semantic: memory, harness config, cross-layer connections, non-code context |
| Combined | ~14,900 | Full architectural memory of the codebase + development context |

14,900 nodes. 23,356 structural edges plus ~7,000 semantic edges. 550 communities. 659 execution flows. Queryable in ~500 tokens per question.

The Meta-Point

In the last post, I drew the analogy to Postgres's ANALYZE — a persistent structural index that prevents full sequential scans. GitNexus extends the analogy further:

- Graphify = `ANALYZE`: statistics catalog, community structure, approximate cardinality
- GitNexus = query planner: knows the actual execution paths, can predict which indexes will be hit, traces the plan step by step

A database with ANALYZE but no query planner makes better estimates but still scans more than it should. A database with both makes plans — "this query touches these three indexes in this order, and here's the cost estimate."

That's what the dual engine does for code intelligence. Graphify tells you the shape. GitNexus tells you the plan.

Try It

If you read the last post and built graphify graphs, adding GitNexus takes 60 seconds:

```bash
# Install
npm install -g gitnexus

# Index your repo (from the repo root)
gitnexus analyze

# Add to Claude Code as MCP server
claude mcp add gitnexus -- npx -y gitnexus@latest mcp

# That's it. Restart Claude Code.
```

If you want the dual-engine routing, add this to your CLAUDE.md:

```markdown
## Knowledge Graph Access

- Code questions (symbol/function/blast radius): `gitnexus context <Symbol> --repo <name>`
- Execution flow search: `gitnexus query "<concept>" --repo <name>`
- Blast radius: `gitnexus impact <Symbol> --repo <name>`
- Semantic/cross-layer: `graph-ask <graph> "<concept>"`
- Decision: GitNexus first for code. Graphify for everything else. Grep as last resort.
```

When the Engines Disagree

The dual-engine design has an honest gap: no conflict resolution layer. When GitNexus and graphify return different answers for the same symbol, the developer's intuition is the tiebreaker.

A real example from my codebase: I queried StorageService on both engines.

- GitNexus found it in `app/services/storage_service.py` with 3 incoming references: `get_storage_service()` calls it, and `data_export_service.py` and `documents.py` import it. Accurate.
- Graphify had `StorageService` as an orphan node with zero edges in community 6. The LLM pass missed every connection, probably because `StorageService` uses a factory pattern (`get_storage_service()`) that doesn't look like a direct import.

The fix wasn't algorithmic — it was knowing which engine to trust for which question. GitNexus wins on structural queries (who imports what, who calls what). Graphify wins on semantic queries (which concepts belong together, what's the architecture boundary). When they disagree on a structural question, GitNexus is right. When they disagree on a semantic grouping, graphify is right.

I'd love to say I built a clever merge layer. I didn't. The routing decision tree is the conflict resolution — you don't ask both engines the same question. You ask the right engine for the question type.

What's Next

The graph is static between commits. Update: I've since wired a PostToolUse hook that auto-reindexes the repo in the background after every git commit. The graph is now never more than one commit stale. The MCP server also connects directly using the absolute node binary path (bypassing npx startup latency and #!/usr/bin/env PATH issues), so it starts in under a second.
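For anyone wanting to replicate the re-index hook, here's a minimal TypeScript sketch for Node, not the exact hook described above. It assumes Claude Code passes the PostToolUse payload as JSON on stdin with `tool_name`, `tool_input.command`, and `cwd` fields; check that against the hooks reference for your version. The `--hook` flag and the helper names are my own hypothetical conventions.

```typescript
import { readFileSync } from "node:fs";
import { spawn } from "node:child_process";

// Minimal slice of the hook payload; only the fields this sketch reads.
interface HookPayload {
  tool_name?: string;
  tool_input?: { command?: unknown };
  cwd?: string;
}

// True when the tool call that just finished was a Bash `git commit`.
function shouldReindex(payload: HookPayload): boolean {
  const command = payload.tool_input?.command;
  return payload.tool_name === "Bash" && typeof command === "string" && /\bgit\s+commit\b/.test(command);
}

// Fire-and-forget re-index so the hook returns immediately.
function reindexInBackground(cwd: string): void {
  const child = spawn("gitnexus", ["analyze"], { cwd, detached: true, stdio: "ignore" });
  child.on("error", () => {}); // ignore spawn failures (e.g. gitnexus not on PATH) instead of crashing
  child.unref();
}

function main(): void {
  const payload = JSON.parse(readFileSync(0, "utf8")) as HookPayload; // fd 0 = stdin
  if (shouldReindex(payload)) reindexInBackground(payload.cwd ?? process.cwd());
}

// Only act when invoked as a hook, so importing this file has no side effects.
if (process.argv.includes("--hook")) main();
```

Register the compiled script as a PostToolUse command hook with a `Bash` matcher and pass `--hook` on its command line. If the hook's environment can't find `gitnexus` on PATH (the same class of problem the absolute-node-path fix addresses for the MCP server), swap in an absolute path.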

Beyond that: cross-repo unified graphs. Right now each repo is a separate index. A `gitnexus query "document encryption"` searches one repo per call; it can't cover frontend and backend at once. Unifying them would let the dual engine trace a frontend button click through the API boundary into the backend service layer in a single query. GitNexus's enterprise tier supports this; the open-source version doesn't yet.

But even without those — 11,237 nodes, zero file reads, 9 execution flows traced in 2 MCP tool calls. The map keeps getting more detailed, and the cost of reading it keeps dropping.

This is post 12 of my daily context series. Previous: ANALYZE for Codebases. DM me if you want to see the dual-engine graph integration code.
